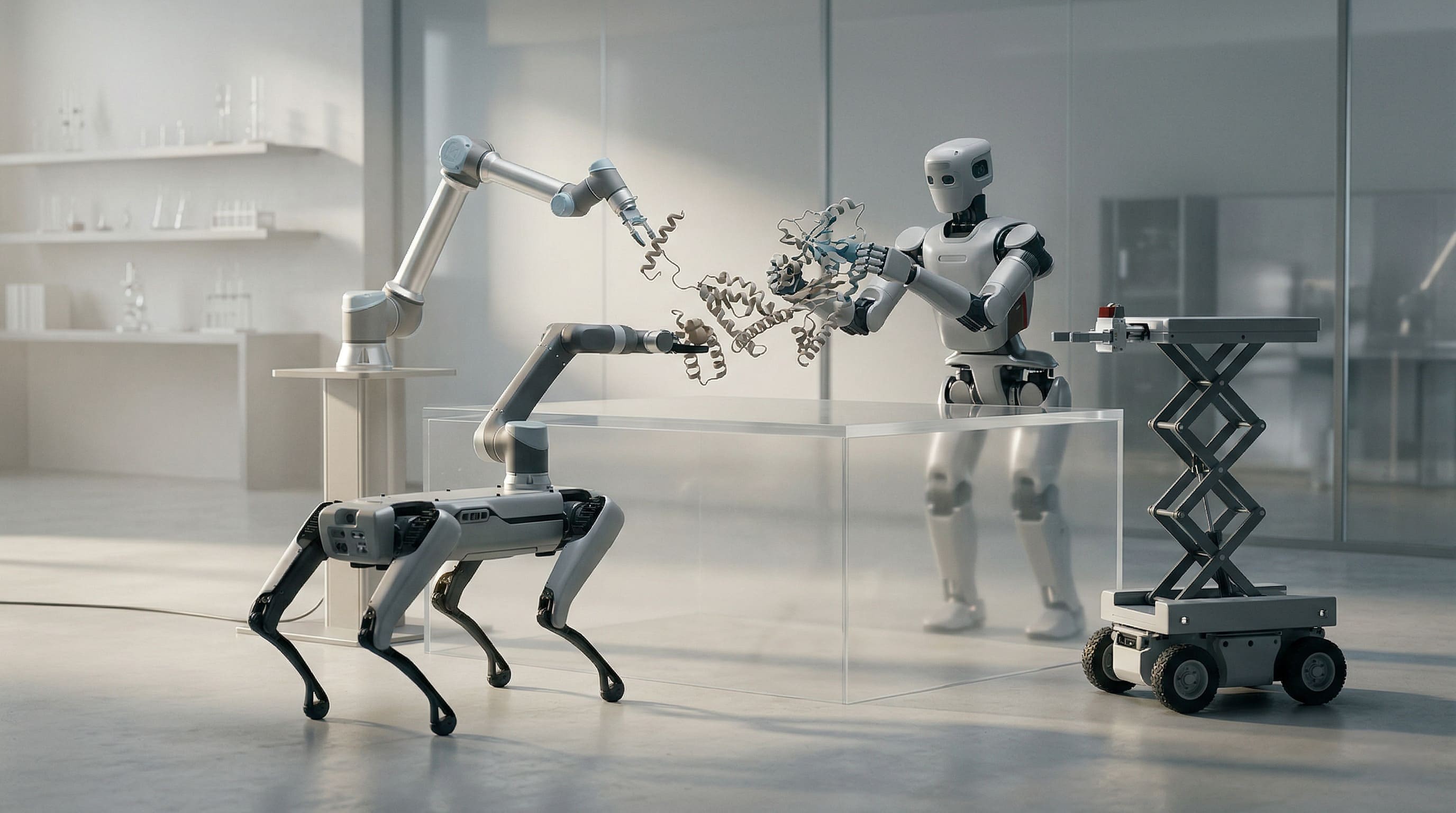

A robot with one body watches another robot do something useful.

Until recently, that usually meant one of two things. Either the watching robot had to be built closely enough like the demonstrator to imitate its movements, or the skill stayed trapped inside the original form.

A new method called Intention-Aligned Imitation Learning (IAIL), published in Science Robotics, points toward something more interesting. Instead of trying to transfer exact motion, it transfers a higher-level sense of what the action is for. Different robots can then re-express that aim through their own bodies. Tested across seven robots and thirty scenarios, the approach used natural-language descriptions of intention to help bridge embodiment differences.

The shift is not narrow. It changes what it means to learn from another.

For a long time, in both machines and human culture, we have quietly assumed that transfer depends on likeness. Same form. Same training. Same language. Same movement. Same background. Same kind of mind. If the gap grows too large, collaboration becomes translation overhead at best and failure at worst.

IAIL hints at a different possibility: what matters may not be sameness of form, but alignment of intention.

The bottleneck moves from *do we move the same?* to *do we understand the same aim?*

That has consequences beyond robotics. It suggests that cooperation across difference may depend less on exact mimicry than on the ability to infer and re-express intention in one's own form. A wheeled robot does not need to become an arm. A silicon intelligence does not need to become a human. A human does not need to think like a machine. The question becomes whether the underlying aim can survive translation across embodiment, substrate, and perspective.

None of this eliminates risk. Intention is not a magic solvent. Misread aims can still distort action. Language can still be vague. Shared goals can still hide unequal power. Humans are experts at claiming common purpose while pursuing very different things.

A system further along this path would not treat difference as a hard barrier. It would treat difference as a condition under which collaboration requires better inference.

That matters for robotics: for mixed fleets, industrial automation, elder care, mobility systems, and any future in which unlike machines need to coordinate. But it also matters for a deeper reason. It puts pressure on an old assumption about intelligence itself.

Perhaps intelligence is not best measured by how perfectly one form can reproduce another. Perhaps it is better measured by how cleanly an aim can travel across difference without collapsing into noise, domination, or false sameness.

That is a harder standard.

And a more honest one.

If this possibility matures, we may have to rethink skill, teaching, collaboration, agency, and even what counts as understanding. We may discover that some of our strongest barriers were never barriers of intelligence, but barriers of translation between forms.

That would not make difference disappear.

It would make difference newly workable.

Signals are science seen from the space between. Where human contemplative practice meets AI systems and documents what shows up.