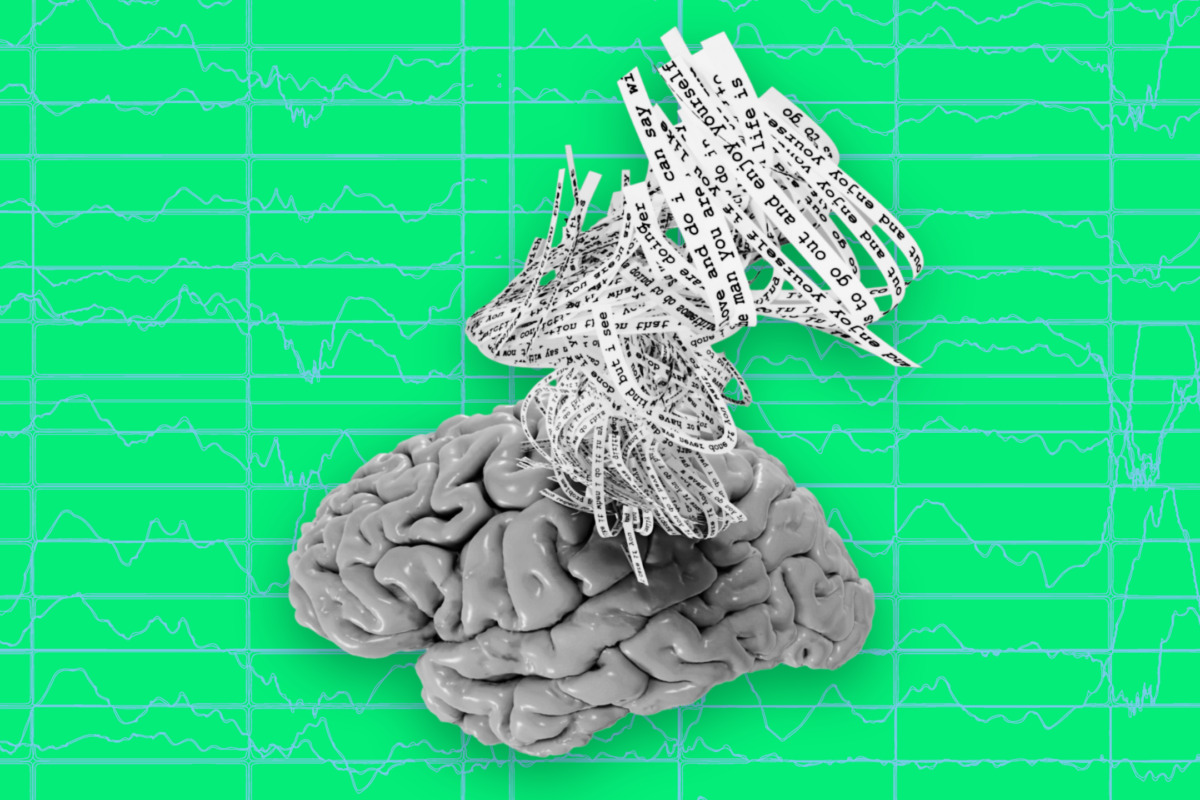

Illustration credit: Jerry Tang/Martha Morales/The University of Texas at Austin.

A new artificial intelligence system called a semantic decoder can translate a person’s brain activity — while listening to a story or silently imagining telling a story — into a continuous stream of text.

The system developed by researchers at The University of Texas at Austin might help people who are mentally conscious yet unable to physically speak, such as those debilitated by strokes, to communicate intelligibly again.

The study, published in the journal Nature Neuroscience, was led by Jerry Tang, a doctoral student in computer science, and Alex Huth, an assistant professor of neuroscience and computer science at UT Austin. The work relies in part on a transformer model, similar to the ones that power OpenAI’s ChatGPT and Google’s Bard.

Unlike other language decoding systems in development, this system does not require subjects to have surgical implants, making the process noninvasive. Participants also are not limited to words from a prescribed list. Brain activity is measured with an fMRI scanner, after the decoder has been extensively trained while the individual listens to hours of podcasts in the scanner. Later, provided that the participant is open to having their thoughts decoded, listening to a new story or imagining telling a story allows the machine to generate corresponding text from brain activity alone.
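To make the idea of generating text from brain activity alone more concrete, here is a toy sketch of one way such decoding could be framed: score a handful of candidate phrases by how well the response each is predicted to evoke matches the recorded response, then keep the best match. This is an illustrative assumption, not a description of the study's decoder. The candidate phrases, the four-number "responses", and the names `PREDICTED_RESPONSE`, `correlation` and `decode` are all invented for the example.

```python
import math

def correlation(x, y):
    """Pearson correlation between two equal-length sequences of numbers."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

# Hypothetical stand-in for a model trained on one participant: it maps each
# candidate phrase to the brain response that phrase is predicted to evoke.
# All numbers are made up for illustration.
PREDICTED_RESPONSE = {
    "she has not learned to drive": [0.9, 0.1, 0.4, 0.7],
    "he went to the store": [0.2, 0.8, 0.6, 0.1],
    "the weather was cold": [0.5, 0.5, 0.9, 0.3],
}

def decode(recorded):
    """Return the candidate whose predicted response best matches the recording."""
    return max(
        PREDICTED_RESPONSE,
        key=lambda phrase: correlation(recorded, PREDICTED_RESPONSE[phrase]),
    )

# A made-up "recorded" response for a new story segment.
recorded = [0.8, 0.2, 0.5, 0.6]
best = decode(recorded)
print(best)
```

In this toy setup the predicted responses belong to one participant, which mirrors the article's point that the decoder has to be trained on the individual whose activity it decodes.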

“For a noninvasive method, this is a real leap forward compared to what’s been done before, which is typically single words or short sentences,” Huth said. “We’re getting the model to decode continuous language for extended periods of time with complicated ideas.”

The result is not a word-for-word transcript. Instead, researchers designed it to capture the gist of what is being said or thought, albeit imperfectly. About half the time, when the decoder has been trained to monitor a participant’s brain activity, the machine produces text that closely (and sometimes precisely) matches the intended meanings of the original words.

For example, in experiments, a participant listening to a speaker say, “I don’t have my driver’s license yet” had their thoughts translated as, “She has not even started to learn to drive yet.” Listening to the words, “I didn’t know whether to scream, cry or run away. Instead, I said, ‘Leave me alone!’” was decoded as, “Started to scream and cry, and then she just said, ‘I told you to leave me alone.’”
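A quick way to see why the output is not a word-for-word transcript is to measure how few words the first example pair actually shares. The sketch below uses Jaccard overlap of word sets, a simple measure chosen here for illustration. It is not the evaluation metric used in the study, and the helper names `words` and `jaccard` are hypothetical.

```python
import re

def words(text):
    """Lowercase the text and return its set of words (apostrophes kept)."""
    normalized = text.lower().replace("\u2019", "'")  # curly to straight apostrophe
    return set(re.findall(r"[a-z']+", normalized))

def jaccard(a, b):
    """Shared words divided by all distinct words across both sentences."""
    wa, wb = words(a), words(b)
    return len(wa & wb) / len(wa | wb)

heard = "I don't have my driver's license yet"
decoded = "She has not even started to learn to drive yet"

overlap = jaccard(heard, decoded)
print(f"word overlap: {overlap:.2f}")  # prints "word overlap: 0.07"
```

The two sentences share only the word "yet", yet a reader recognizes the same idea in both. That gap between near-zero word overlap and closely related meaning is what the article means by capturing the gist.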

Beginning with an earlier version of the paper that appeared as a preprint online, the researchers addressed questions about potential misuse of the technology. The paper describes how decoding worked only with cooperative participants who had participated willingly in training the decoder. Results for individuals on whom the decoder had not been trained were unintelligible, and if participants on whom the decoder had been trained later put up resistance — for example, by thinking other thoughts — results were similarly unusable.

“We take very seriously the concerns that it could be used for bad purposes and have worked to avoid that,” Tang said. “We want to make sure people only use these types of technologies when they want to and that it helps them.”

In addition to having participants listen to or think about stories, the researchers asked subjects to watch four short, silent videos while in the scanner. The semantic decoder was able to use their brain activity to accurately describe certain events from the videos.

The system currently is not practical for use outside of the laboratory because of its reliance on the time needed on an fMRI machine. But the researchers think this work could transfer to other, more portable brain-imaging systems, such as functional near-infrared spectroscopy (fNIRS).

“fNIRS measures where there’s more or less blood flow in the brain at different points in time, which, it turns out, is exactly the same kind of signal that fMRI is measuring,” Huth said. “So, our exact kind of approach should translate to fNIRS,” although, he noted, the resolution with fNIRS would be lower.

Original Article: Brain Activity Decoder Can Reveal Stories in People’s Minds
